Nimbus Forecast: From Manual yr.no Lookups to a Deployed Platform at DCCMS

Jimmy Matewere

The first Sunday, they asked me to collect rainfall forecasts from yr.no for 99 stations. The workflow was manual: open the site, search each coordinate, read the 7-day totals, log the numbers. I got through the first 10 and was genuinely annoyed. Not frustrated in a general way, annoyed in the specific way you get when something feels like it has no business being done by hand. The site had an API. That was enough information. I finished the task, then I told myself I was never doing it again.

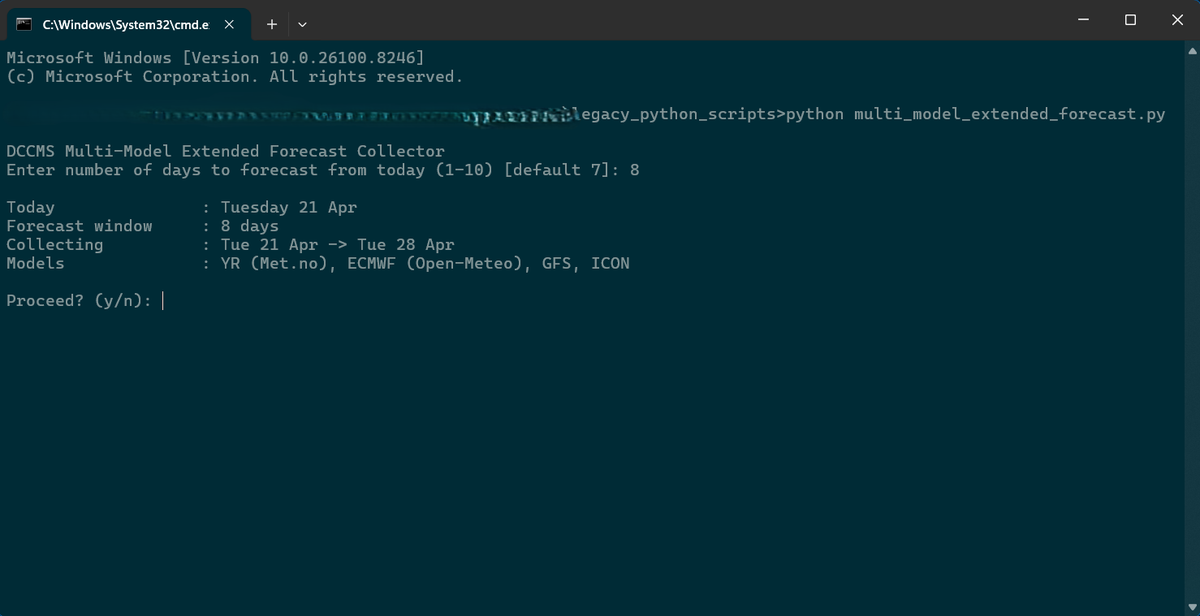

The first version of the script was messy. Timezone issues, day-boundary misattribution, summing logic that would quietly produce the wrong totals without signalling anything was wrong. It took several iterations before I trusted the output. By the time it was stable, it handled up to 10 days of forecast, checked whether an output file for that date range already existed before writing, and asked whether you wanted to start from today or tomorrow. Small decisions, but each one had a reason. The prompts existed because I'd run it wrong enough times that I'd automated the sanity checks I was doing manually.
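The day-boundary problem is easier to see in code than in prose. What follows is a minimal sketch, not the original script: it assumes hourly precipitation values timestamped in UTC and a fixed UTC+2 offset for Malawi, and the names `HourlyPoint`, `localDateKey`, and `dailyTotals` are hypothetical. It is written in TypeScript to match the platform that came later.

```typescript
// Hypothetical shape: one precipitation value per forecast hour, timestamped in UTC.
interface HourlyPoint {
  timeUtc: string; // ISO 8601, e.g. "2024-01-14T22:00:00Z"
  precipMm: number;
}

const MALAWI_OFFSET_MS = 2 * 60 * 60 * 1000; // UTC+2, no daylight saving

// Shift the timestamp into local time before taking the date part, so that
// rain falling at 22:00 UTC is credited to the next local day.
function localDateKey(timeUtc: string): string {
  const shifted = new Date(Date.parse(timeUtc) + MALAWI_OFFSET_MS);
  return shifted.toISOString().slice(0, 10); // "YYYY-MM-DD"
}

// Sum hourly values into one total per local calendar day.
function dailyTotals(points: HourlyPoint[]): Map<string, number> {
  const totals = new Map<string, number>();
  for (const p of points) {
    const key = localDateKey(p.timeUtc);
    totals.set(key, (totals.get(key) ?? 0) + p.precipMm);
  }
  return totals;
}
```

Grouping by the UTC date instead would still produce a plausible-looking number for every day, which is exactly why this kind of error stays silent.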

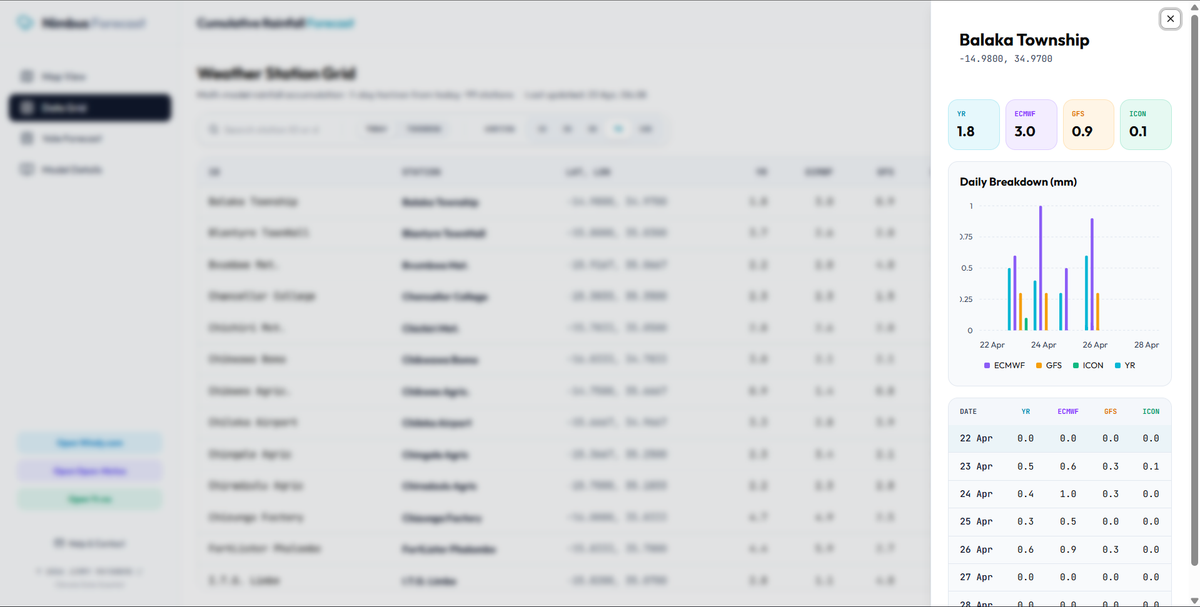

The multimodel version came after. YR, ECMWF, GFS, ICON. Four sources, one output. The Open-Meteo models were clean: API values matched their own platform exactly. YR was different. Days 1 to 3 of the daily breakdown would show small variances against what yr.no displayed on the site, usually under 2mm, then stabilize from day 4 onward. I understood why eventually: the near-term forecast uses a denser hourly timestep, and if you query close to a model update cycle, you catch a partial run. Not a bug, just how the API works. Under 2mm I could live with.

The scripts worked. People were using them. I still couldn't leave it there.

Part of it was that the script required a terminal. That's fine for me, less fine for colleagues who don't live in VS Code. Part of it was that the multimodel output was still a CSV that fed an Excel file that fed a bulletin. There were too many steps between the data and the decision. But if I'm honest, part of it was also that I wanted to see how far I could take it. The scripts were a solution. The platform was a question about what the solution could become.

The initial plan had a Python backend on GCP. I scrapped it quickly. What was happening under the hood was API calls, data extraction, storage, display. Next.js handles all of that. Adding GCP was adding infrastructure for infrastructure's sake, and GCP's free-tier cold-start delays would have made the thing slower. I stayed in TypeScript and never looked back.

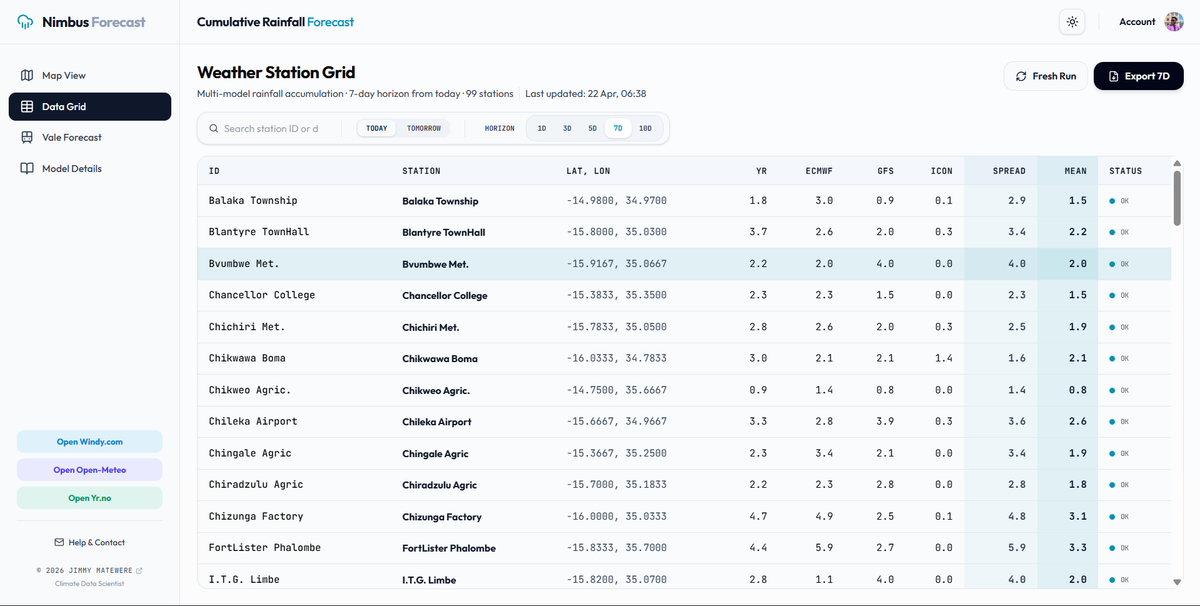

The platform went through more iterations than I can clearly sequence. Async concurrency for the API calls, because fetching 99 stations sequentially was too slow. A mutex lock in the database to prevent two concurrent ingestion runs from deleting and rewriting simultaneously. The correct timezone handling, which turned out to be harder than expected: Node's Intl engine on some Vercel serverless runtimes injects invisible Unicode characters into date strings that silently break Postgres parameter binding. I ended up doing UTC+2 manually, just arithmetic, to get a string Postgres would accept without complaint.
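The manual UTC+2 approach can be sketched roughly as below. This is an illustration under assumptions, not the platform's code: it assumes the target is a plain `YYYY-MM-DD HH:MM:SS` string, and `toMalawiTimestamp` and `pad2` are hypothetical names. The point is that nothing passes through `Intl` or `toLocaleString`, so the output can only contain ASCII digits and punctuation.

```typescript
const UTC_PLUS_2_MS = 2 * 60 * 60 * 1000; // Malawi is UTC+2 year-round

function pad2(n: number): string {
  return n < 10 ? `0${n}` : String(n);
}

// Shift the instant by two hours, then read it back with the UTC getters.
// No locale formatting is involved, so no locale-dependent characters can appear.
function toMalawiTimestamp(utc: Date): string {
  const d = new Date(utc.getTime() + UTC_PLUS_2_MS);
  return (
    `${d.getUTCFullYear()}-${pad2(d.getUTCMonth() + 1)}-${pad2(d.getUTCDate())} ` +
    `${pad2(d.getUTCHours())}:${pad2(d.getUTCMinutes())}:${pad2(d.getUTCSeconds())}`
  );
}
```

A fixed offset is only safe because Malawi does not observe daylight saving; for a zone that does, this shortcut would not hold.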

The serverless execution constraint was the other thing localhost didn't show me. On a local dev environment, ingestion runs slowly but it runs. On Vercel, a serverless function has a default 15-second execution limit. I found out the hard way: multiple 504s on preview deployments, watching the logs in one browser window while using the platform in another. The fix was async batching, grouping stations into concurrent sets rather than hammering all 99 at once, and setting an explicit maxDuration on the repair endpoint. The mutex lock came from a related problem. Two concurrent Fresh Run requests could both start deleting and rewriting the database simultaneously, which produced stations with no data. A timestamp-based lock in the config table resolved it: if a run started less than three minutes ago, the next request gets a 409.
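Both fixes in this paragraph are small enough to sketch. The code below is a hedged illustration, not the deployed implementation: `runInBatches`, `LockStore`, and `tryStartRun` are hypothetical names, and the lock is modelled as an in-memory object standing in for the row in the config table.

```typescript
// Run an async task over items in fixed-size batches: tasks within a batch run
// concurrently, and the next batch starts only after the whole batch has resolved.
async function runInBatches<T, R>(
  items: T[],
  batchSize: number,
  task: (item: T) => Promise<R>,
): Promise<R[]> {
  const results: R[] = [];
  for (let i = 0; i < items.length; i += batchSize) {
    const batch = items.slice(i, i + batchSize);
    results.push(...(await Promise.all(batch.map(task))));
  }
  return results;
}

const LOCK_WINDOW_MS = 3 * 60 * 1000; // three minutes, as described above

// Hypothetical in-memory stand-in for the lock timestamp kept in the config table.
interface LockStore {
  startedAtMs: number | null;
}

// Returns the status the endpoint would send: 409 if a run started less than
// three minutes ago, otherwise 200 after recording the new start time.
function tryStartRun(store: LockStore, nowMs: number): 200 | 409 {
  if (store.startedAtMs !== null && nowMs - store.startedAtMs < LOCK_WINDOW_MS) {
    return 409;
  }
  store.startedAtMs = nowMs;
  return 200;
}
```

Two caveats on the sketch. `Promise.all` rejects as soon as one station fails, so a real ingestion run would probably want per-station error handling. And against a real database, the check and the write need to happen in a single statement (a conditional update, for instance), otherwise two requests can both pass the check before either records its start.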

The map came together. The station detail drawer. The daily breakdown chart showing all four models side by side.

At some point I ran validation across all 99 stations after a fresh ingestion and got 100% parity on the Open-Meteo models. YR matched within the margins I'd already accepted.
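A parity check of this kind could look something like the sketch below. It assumes a comparison between the value stored by the platform and a reference value from the source, with an exact match required for the Open-Meteo models and a margin of under 2 mm for YR. The types and function names (`ParityRow`, `withinTolerance`, `parityReport`) are hypothetical, as is the small epsilon used to absorb floating-point noise.

```typescript
type Model = "yr" | "ecmwf" | "gfs" | "icon";

// Hypothetical row: one stored value and the reference it is checked against.
interface ParityRow {
  station: string;
  model: Model;
  storedMm: number;
  referenceMm: number;
}

const EPS = 1e-9; // assumed: absorbs floating-point noise only

// YR is allowed to differ by less than 2 mm; the other models must match.
function withinTolerance(row: ParityRow): boolean {
  const diff = Math.abs(row.storedMm - row.referenceMm);
  return row.model === "yr" ? diff < 2 : diff <= EPS;
}

// Share of rows that pass, plus the rows that do not.
function parityReport(rows: ParityRow[]): { passRate: number; failures: ParityRow[] } {
  const failures = rows.filter((r) => !withinTolerance(r));
  const passRate = rows.length === 0 ? 1 : (rows.length - failures.length) / rows.length;
  return { passRate, failures };
}
```

Returning the failing rows alongside the rate matters more than the rate itself: a single mismatched station is the thing worth looking at.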

The cron runs at 04:00 UTC, 06:00 Malawi time. By 07:30 when someone opens the platform the data is already there. The intention was a quick scan first, then a Fresh Run if something looked off. Not arriving to yesterday's numbers.

A few weeks ago it became part of the actual workflow, not a demo, not a pilot, the actual Monday morning process. I was happy. The kind of happy that's quiet. My work was being used at DCCMS. The feedback that came back was useful.

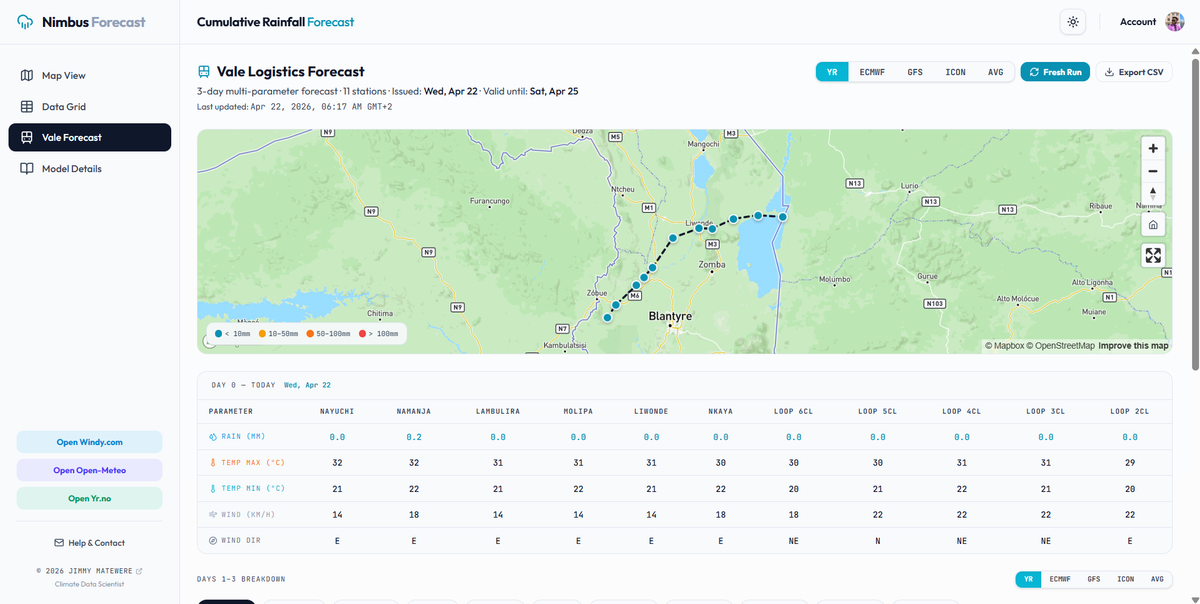

The Vale Logistics portal came from seeing where the friction still was. The rainfall picture was useful, but the workflow around it still had manual steps: wind direction looked up separately, temperatures pulled from somewhere else, values typed into an Excel template by hand. Eleven railway corridor stations, parameters the main platform wasn't storing, a CSV export formatted exactly to match the bulletin column order. That extension wasn't in any original plan, and it wasn't a small addition: it meant a separate database table, a separate ingestion pipeline, a separate cron, and a UI that matched the structure of the printed bulletin rather than the national grid. The same impulse that moved me from the script to the platform moved me here. If the friction is visible, it's worth going after.
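The export that matches the bulletin column order is the easiest piece of this to illustrate. The sketch below is an assumption-heavy example: the record shape, the field names, and the column order in `BULLETIN_COLUMNS` are all hypothetical, since the real bulletin layout isn't reproduced here. What it shows is the design choice of declaring the column order once, in one array, so the CSV never depends on how the row objects happen to be built.

```typescript
// Hypothetical record shape; the real bulletin's parameters may differ.
interface CorridorRow {
  station: string;
  rainfallMm: number;
  tempMinC: number;
  tempMaxC: number;
  windDirection: string;
}

// Hypothetical column order, declared once and used for both header and rows.
const BULLETIN_COLUMNS: ReadonlyArray<keyof CorridorRow> = [
  "station",
  "rainfallMm",
  "tempMinC",
  "tempMaxC",
  "windDirection",
];

// Quote a field only when it contains a comma, a double quote, or a line break.
function csvField(value: string | number): string {
  const s = String(value);
  return /[",\n\r]/.test(s) ? `"${s.replace(/"/g, '""')}"` : s;
}

function toBulletinCsv(rows: CorridorRow[]): string {
  const header = BULLETIN_COLUMNS.join(",");
  const lines = rows.map((row) =>
    BULLETIN_COLUMNS.map((col) => csvField(row[col])).join(","),
  );
  return [header, ...lines].join("\r\n");
}
```

If the bulletin template changes, only the array changes; the rows and the ingestion pipeline stay as they are.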

The platform will keep growing. Five-day regional forecasts across Malawi's climate zones sit next on the list. That one still waits on someone manually assigning all 99 stations to their forecast regions in the CMS before any code can be written. I'll do it eventually.

Continue the Conversation

I welcome peer perspectives and questions regarding any of the topics discussed.